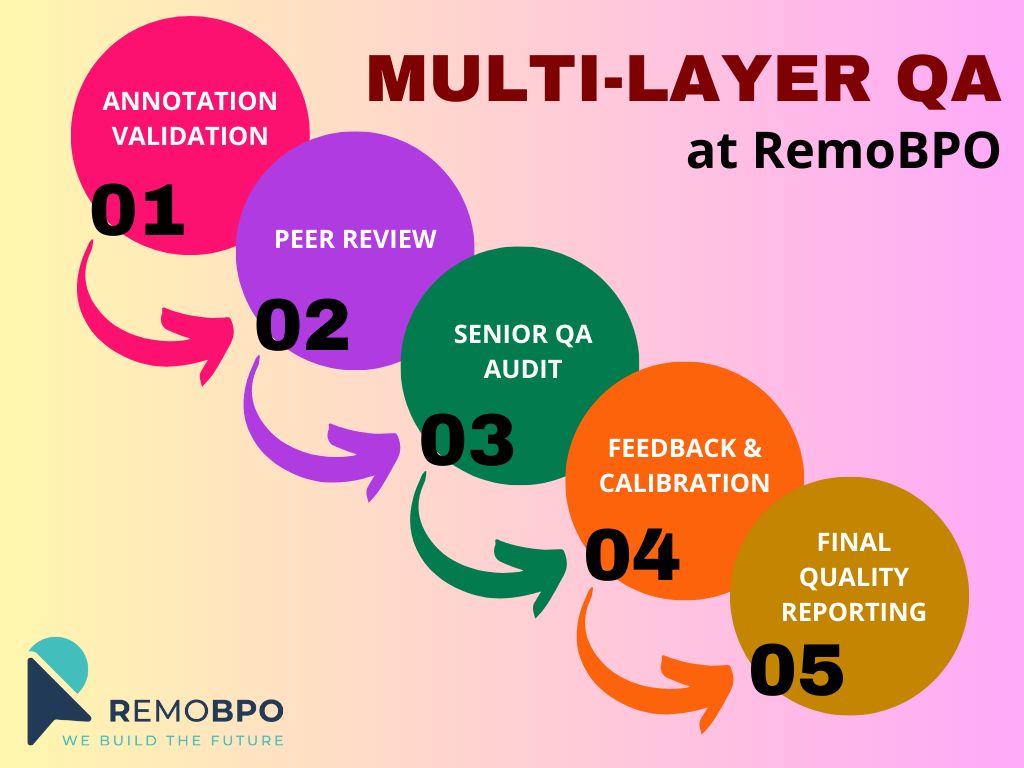

High-quality AI doesn’t come from annotation alone.

It comes from how quality is controlled at every stage.

At RemoBPO, we design QA as a multi-layer workflow, not a final inspection step.

Here’s how it works:

Layer 1 - Annotation validation

Each task is completed following project-specific guidelines, with built-in checks to prevent missing labels, incorrect classes, and format errors.
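To make the idea of built-in checks concrete, here is a minimal sketch of what an automated validation pass might look like for a bounding-box task. The class list, the `label` and `bbox` field names, and the `validate_annotation` helper are all hypothetical, chosen only for illustration. They are not a description of RemoBPO's actual tooling.

```python
# Hypothetical class list for an example project; real projects define their own.
ALLOWED_CLASSES = {"car", "pedestrian", "cyclist"}


def validate_annotation(ann: dict, width: int, height: int) -> list[str]:
    """Return a list of problems found in one annotation (empty if it passes)."""
    errors = []

    # Missing-label and incorrect-class checks.
    label = ann.get("label")
    if not label:
        errors.append("missing label")
    elif label not in ALLOWED_CLASSES:
        errors.append(f"unknown class: {label}")

    # Format check: assumes boxes are stored as [x_min, y_min, x_max, y_max].
    bbox = ann.get("bbox")
    is_four_numbers = (
        isinstance(bbox, (list, tuple))
        and len(bbox) == 4
        and all(isinstance(v, (int, float)) for v in bbox)
    )
    if not is_four_numbers:
        errors.append("bbox must be four numbers")
    else:
        x0, y0, x1, y1 = bbox
        if not (0 <= x0 < x1 <= width and 0 <= y0 < y1 <= height):
            errors.append("bbox out of bounds or empty")

    return errors
```

A task would only move on to the next layer when the returned list is empty; anything else goes back to the annotator with the specific problems attached.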

Layer 2 - Peer review

Annotated data is reviewed by independent annotators to catch human bias, interpretation gaps, and early inconsistencies.
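One common way to put a number on peer-review disagreement is Cohen's kappa, which measures how often two annotators agree beyond what chance alone would produce. The sketch below is an illustration of that general technique, not a statement of the metric used in this workflow; the function name and the sample labels are made up.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")

    n = len(labels_a)
    # Share of items where both annotators chose the same class.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each annotator's class frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:
        # Both annotators used one identical class for everything.
        return 1.0
    return (observed - expected) / (1 - expected)


annotator_1 = ["cat", "dog", "cat", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
kappa = cohens_kappa(annotator_1, annotator_2)  # 0.5 for this toy example
```

A low score on a given class is a signal that the guideline for that class is being interpreted differently, which is exactly the kind of gap peer review is meant to surface.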

Layer 3 - Senior QA audit

Our QA leads conduct in-depth reviews of random and high-risk samples, focusing on edge cases, class logic, and dataset-wide consistency.
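As a rough sketch of how an audit batch combining random and high-risk samples could be assembled: take every item above a risk threshold, then add a random slice of the rest. The `risk` score, the 5% rate, the 0.8 threshold, and the `select_audit_sample` helper are assumptions for illustration only; how risk is actually scored is not specified here.

```python
import random


def select_audit_sample(items, random_rate=0.05, risk_threshold=0.8, seed=0):
    """Pick all high-risk items plus a random share of the remaining ones."""
    rng = random.Random(seed)  # fixed seed keeps the audit batch reproducible

    high_risk = [it for it in items if it["risk"] >= risk_threshold]
    rest = [it for it in items if it["risk"] < risk_threshold]

    # Sample at least one ordinary item whenever any exist.
    k = min(len(rest), max(1, round(len(rest) * random_rate))) if rest else 0
    return high_risk + rng.sample(rest, k)


# Toy data: 3 high-risk items and 100 ordinary ones.
items = [{"id": i, "risk": 0.9 if i < 3 else 0.1} for i in range(103)]
audit_batch = select_audit_sample(items)
```

Auditing every high-risk item keeps edge cases from slipping through, while the random slice gives an unbiased read on overall dataset consistency.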

Layer 4 - Feedback & calibration

Errors are categorized, documented, and converted into training materials. Weekly calibration sessions align all annotators to the same labeling standard.

Layer 5 - Final quality reporting

Clients receive transparent QA metrics, accuracy scores, and improvement tracking.

This layered approach lets us scale volume without sacrificing precision, delivering stable, training-ready datasets our AI partners can trust.

Because in AI, quality isn't checked once.

It's engineered.